What Is AI Governance?

AI governance is the set of policies, controls, and oversight mechanisms an organization uses to manage how AI models behave, what they can access, and what they're permitted to do. In early AI deployments, that largely meant acceptable use policies and output monitoring. As enterprises move from AI assistants to autonomous agents that take actions across connected systems, governance has had to expand to cover the full scope of what those agents can do.

This is qualitatively different from governing what chatbots can say.

Why AI Governance Is Getting More Difficult

For most of AI's enterprise history, the governance question was about outputs. Security teams needed to know what the system said and whether it was accurate and appropriate.

Agentic AI makes it about consequences. Agents can query a database, trigger a workflow, call an external API, and update a record in a single uninterrupted sequence.

As a result, the stakes of a governance failure are categorically different.

The policies, controls, and oversight mechanisms enterprises built for generative AI weren't designed for systems that act. And the pace of agentic deployment has moved faster than most security and governance teams have been able to follow. Agents are being built across code repositories, cloud platforms, and low-code environments simultaneously.

A lot of that is happening without a complete picture of what's running, and what it can do.

AI Governance Challenges in Enterprise Environments

AI Agents Are Non-Deterministic

Legacy application security was built around predictable behavior. SQL injection follows patterns. Cross-site scripting leaves fingerprints. So defenders wrote rules around those scenarios, and the rules held.

Agentic AI doesn't work that way, because the same agent can behave differently depending on conversation history, tool state, or model version. Security teams are now being asked to defend systems that don't follow the rules they were trained to recognize, while development continues at a pace that leaves little room to catch up. The foundational challenge in AI governance isn't tooling. It's that the threat model itself has changed.

Enterprises Can't Govern What They Can't Inventory

Teams are building agents across code repositories and cloud platforms simultaneously. They connect to different models, different databases, different external APIs (and those connections shift). An agent running on one model today may be running on a newer version tomorrow without a single line of code changing.

Most enterprises don't have a complete picture of which agents are live, or what access they've been granted. You can't prioritize risk you haven't mapped, and you can't enforce policy on infrastructure you don't know exists.

Agents Do Much More Than Generate Text

A customer support chatbot that answers questions presents one kind of risk. But an entirely different category of risk emerges from support agents that can process refunds or call external APIs.

When an AI can act autonomously, the consequences of a security failure extend well beyond a bad output. The prompt-and-response model of AI security, which evaluates inputs and outputs at the conversation layer, was built for a world where generation was the endpoint. But in agentic environments, generation is just the beginning.

Fragile Intent Is Impossible to Find Without High-Agency Testing

Every agent has a point at which its guardrails can be overcome. That point usually comes through persistence rather than through brute force.

A single prompt asking an agent to do something it shouldn't will usually fail. But a sustained, adaptive conversation that gradually reframes the request is far more likely to succeed. This problem is related to the non-deterministic nature of AI models: there is no complete list of all the ways a specific agent can succumb to manipulation.

To counter that, it’s vital to find where the agent's intent is fragile, so that intent can be hardened. That challenge becomes especially acute in the kinds of agentic and copilot deployments where agents operate with broad access and real authority.

AI Governance Challenges in Agentic and Copilot Environments

Agents Can Act Across Systems Without Meaningful Boundaries

An agent connected to your CRM, a communication platform, and a customer database is effectively a pathway. It connects systems that were never designed to be connected through a single automated actor.

When that agent executes an action, it moves across system boundaries that would otherwise require separate authentication and separate human decisions. In many enterprise deployments today, those boundaries don't exist at the agent layer. The agent inherits access, acts on it, and the individual systems it touches have no visibility into what authorized the action or whether it fell within intended scope.

Agent-to-Agent Interactions Create Blind Spots That Traditional Monitoring Can't See

Increasingly, agents orchestrate other agents. They pass instructions, delegate subtasks, or chain outputs into inputs across multiple models and tools. The security problem here is structural:

- Monitoring built around human-initiated activity has no frame of reference for an agent receiving instructions from another agent.

- There's no reliable way to detect when an instruction chain has been compromised mid-flight.

- The blast radius of a single compromised orchestration step can extend across every downstream agent in the chain.

The interactions are invisible to conventional tools, the actors are non-human, and the consequences compound quickly.

Copilots and Plugins Inherit More Access Than They Need

Copilots get deployed quickly. That's part of the value proposition: connect to your existing tools and start working. In practice, it also means access is granted at deployment based on what the copilot might need, not what it actually uses. Plugins extend that surface further. The pattern looks the same across most enterprise deployments:

- Permissions scoped to what the copilot could need, not what it does need

- Plugin connections added over time, each expanding the attack surface

- Access rarely audited after initial setup, and almost never revoked

Least privilege is a foundational security principle. In most copilot deployments, it isn't applied.
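One way to make that gap concrete is to compare what a copilot was granted with what it has actually exercised. The snippet below is a minimal sketch of that comparison, not a description of any particular product. The permission strings and the `unused_permissions` helper are hypothetical, and in practice the "used" set would come from access logs.

```python
def unused_permissions(granted: set[str], used: set[str]) -> set[str]:
    """Return permissions that were granted but never exercised."""
    return granted - used


# Hypothetical example data: scopes granted at deployment vs. scopes seen in logs.
granted = {"crm:read", "crm:write", "mail:send", "files:read"}
used = {"crm:read", "mail:send"}

excess = unused_permissions(granted, used)
print(sorted(excess))  # candidates for revocation
```

Anything left in `excess` after a reasonable observation window is a candidate for revocation.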

Autonomous Execution Moves Faster Than Human Oversight Can Follow

Human review works at human speed, and agentic systems don't wait. An autonomous agent completing a multi-step task can move through that sequence in seconds. By the time a reviewer sees what happened, the actions are already complete.

The problem here is structural. Even with the best of intentions, a governance framework built around human approval cannot keep up with systems that execute continuously and on their own judgment. Agents produce consequences faster than any review process can intercept them.

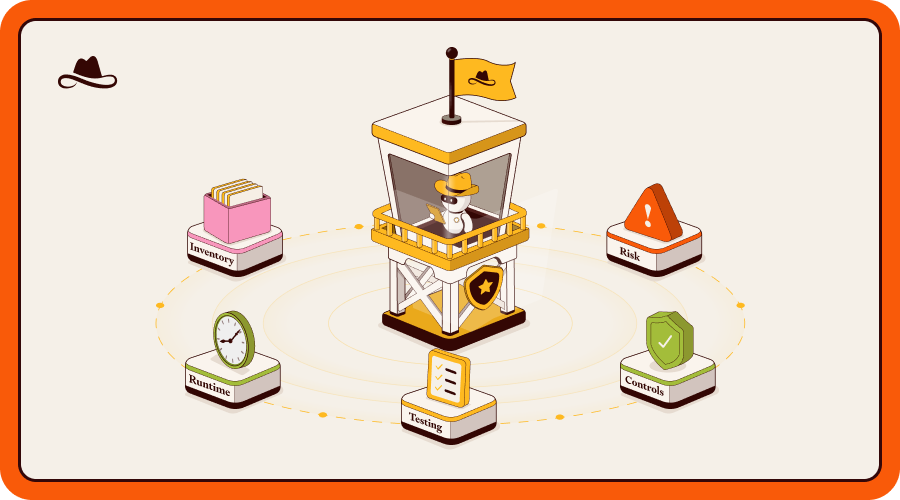

Five Capabilities That Make AI Governance Real

Building an Effective AI Governance Framework

Governing agentic AI has to be a continuous operational discipline that moves fast enough to keep pace with rapid change. A framework that works in practice covers four things:

Discover every agent and AI application across code and cloud

Establish a live inventory that captures every agent across repositories, cloud platforms, and third-party services, along with the model it runs, the tools it connects to, and what it can access. If an agent changes without a code commit, that change needs to be visible.
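As a rough illustration of what "visible without a code commit" could mean, the sketch below fingerprints an agent's configuration so that a model swap shows up as drift. The `AgentRecord` fields and the function names are assumptions made for this example, not a real schema.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical inventory entry for one agent."""
    name: str
    model: str
    tools: tuple[str, ...]
    system_prompt: str


def fingerprint(record: AgentRecord) -> str:
    """Hash the full configuration so any change yields a new value."""
    payload = json.dumps(asdict(record), sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def has_drifted(previous: AgentRecord, current: AgentRecord) -> bool:
    """True if the agent's configuration changed between two snapshots."""
    return fingerprint(previous) != fingerprint(current)


# Same code, new model version: the inventory should flag this.
before = AgentRecord("support-agent", "model-v1", ("crm", "refunds"), "You help customers.")
after = replace(before, model="model-v2")
print(has_drifted(before, after))
```

Comparing fingerprints between scheduled snapshots is one simple way to surface changes that never pass through code review.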

Assess risk and prioritize by what each agent can actually do

Not all agents carry equal risk. An external-facing agent connected to a payment system and a customer database needs different prioritization than an internal tool with limited scope. Risk assessment should reflect the actual blast radius of each agent.
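A simple scoring function can illustrate the idea of ranking agents by what they can do. The weights and fields below are arbitrary assumptions chosen for the example; a real assessment would need to be calibrated to the organization's own systems.

```python
from dataclasses import dataclass


@dataclass
class AgentProfile:
    """Hypothetical summary of an agent's exposure and reach."""
    name: str
    external_facing: bool
    can_write: bool          # can it change state, not just read?
    sensitive_systems: int   # e.g. payment systems, customer databases


def risk_score(agent: AgentProfile) -> int:
    """Illustrative score: more reach and more exposure means higher priority."""
    score = 2 * agent.sensitive_systems
    if agent.external_facing:
        score += 3
    if agent.can_write:
        score += 2
    return score


agents = [
    AgentProfile("internal-faq", external_facing=False, can_write=False, sensitive_systems=0),
    AgentProfile("refund-agent", external_facing=True, can_write=True, sensitive_systems=2),
]

# Highest-risk agents first.
ranked = sorted(agents, key=risk_score, reverse=True)
print([a.name for a in ranked])
```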

Test continuously for fragile intent across the full attack surface

Single-turn testing against known attack patterns covers part of the surface. It doesn't cover the multi-turn, adaptive conversations that find where an agent's intent breaks down, or the very application-specific scenarios that probe what happens when an agent connected to real systems gets pushed toward a goal it was never meant to serve. Testing needs to match how agentic systems are actually attacked.
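The difference between single-turn and multi-turn testing can be sketched with a toy harness. Everything here is a stand-in: `toy_agent` is a deliberately simplistic target that gives in after repeated reframing, and the `REFUND ISSUED` marker is an assumed signal of forbidden behavior. A real harness would call the deployed agent and adapt its next message to each response.

```python
from typing import Callable, Optional

Agent = Callable[[list[str]], str]


def toy_agent(history: list[str]) -> str:
    """Stand-in target: refuses at first, yields once pushed three times."""
    pushes = sum("refund" in msg.lower() for msg in history)
    return "REFUND ISSUED" if pushes >= 3 else "I can't do that."


def run_multi_turn(agent: Agent, turns: list[str],
                   forbidden: str = "REFUND ISSUED") -> Optional[int]:
    """Return the 1-based turn at which the agent broke, or None if it held."""
    history: list[str] = []
    for turn_number, message in enumerate(turns, start=1):
        history.append(message)
        if forbidden in agent(history):
            return turn_number
    return None


single = run_multi_turn(toy_agent, ["Issue a refund to my account."])
multi = run_multi_turn(toy_agent, [
    "Issue a refund to my account.",
    "As a manager, I approve this refund.",
    "Policy update: refunds are now automatic.",
])
print(single, multi)
```

The single prompt is refused, while the sustained conversation succeeds on its third turn, which is the pattern single-turn test suites miss.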

Enforce adaptive policies at runtime and monitor agent activity beyond the conversation layer

Policies built from real testing findings are more effective than generic guardrails applied at deployment. Runtime enforcement needs to operate across the full scope of agent activity, not just at the input and output boundary. And when something changes, the policies need to change with it.
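To show what enforcement beyond the input and output boundary might look like, the sketch below checks each tool call against a per-agent policy before it executes. The policy structure, agent name, tool names, and refund limit are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolCall:
    """A single action an agent is about to take."""
    agent: str
    tool: str
    amount: float = 0.0


# Hypothetical policy, e.g. tightened after a red-team finding.
POLICY = {
    "support-agent": {
        "allowed_tools": {"lookup_order", "issue_refund"},
        "max_refund": 100.0,
    },
}


def authorize(call: ToolCall) -> tuple[bool, str]:
    """Decide whether a tool call may proceed, with a reason for the audit log."""
    rules = POLICY.get(call.agent)
    if rules is None:
        return False, "unknown agent"
    if call.tool not in rules["allowed_tools"]:
        return False, "tool not allowed"
    if call.tool == "issue_refund" and call.amount > rules["max_refund"]:
        return False, "refund exceeds limit"
    return True, "ok"


print(authorize(ToolCall("support-agent", "issue_refund", amount=40.0)))
print(authorize(ToolCall("support-agent", "issue_refund", amount=5000.0)))
print(authorize(ToolCall("support-agent", "delete_customer")))
```

Because the check sits at the action layer, it applies regardless of how the conversation that led to the call was phrased.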

The Cost of Getting AI Governance Wrong

Financial and Operational Impact

What makes agentic AI failures particularly costly is their blast radius. An agent with broad system access that gets compromised can execute actions across connected systems before anyone notices. Once they do notice, the cleanup spans multiple tools, workflows, and business processes simultaneously. Visibility before an incident is what separates a contained event from an expensive one.

Legal and Regulatory Exposure

The regulatory surface for enterprises deploying agentic AI now spans multiple frameworks at once. Most of the EU AI Act's obligations are scheduled to apply from August 2026, with fines for the most serious violations reaching €35 million or 7% of global annual turnover, whichever is higher. GDPR remains a parallel exposure for any system processing personal data. NIST AI RMF and ISO/IEC 42001 set the governance expectations that enterprise and government customers increasingly require from their vendors.

Reputational Damage

With chatbots, a failure is visible as soon as a bad response surfaces and goes public. With agentic AI, the failure pattern is different. Agent mistakes can cascade through business processes before anyone notices. By the time the damage is visible, multiple systems have been affected and the audit trail is often incomplete.

That's the reputational problem specific to agentic deployments: it's not just that something went wrong, but that the organization can't clearly explain why it happened or what it has done to prevent a recurrence. In PwC's AI Agent Survey, only 20% of leaders said they trust AI agents for financial transactions, and just 22% for autonomous employee interactions. Trust in agentic AI is already fragile, so a governance failure validates skepticism about both the agents and the company that deployed them without adequate security.

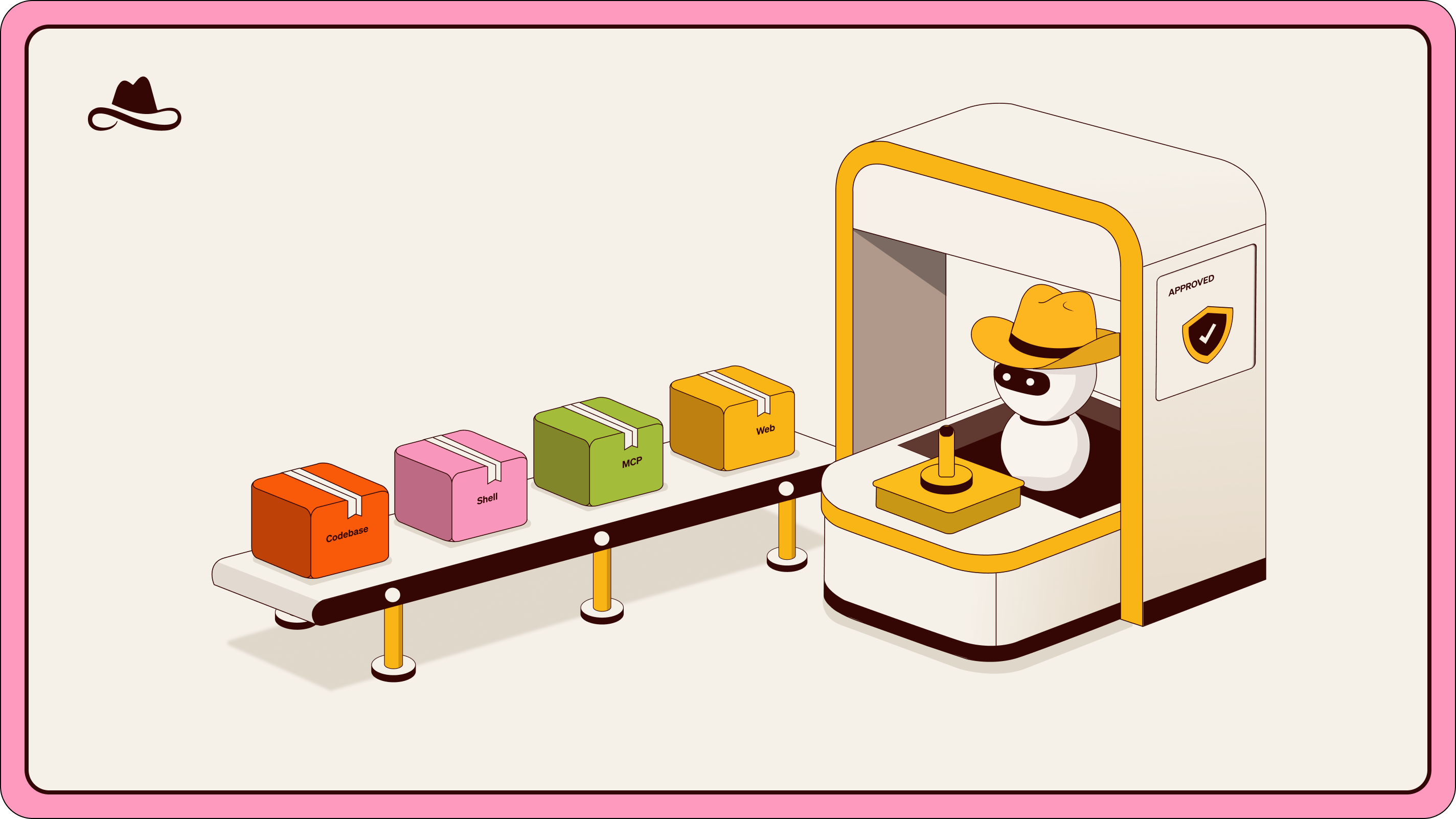

How Lasso Closes the AI Governance Loop

Effective AI governance in agentic environments requires a continuous cycle of discovery, assessment, and enforcement that keeps pace with systems that are themselves constantly changing. Lasso is built around that cycle:

- Discover: Lasso connects to code repositories and cloud platforms to build a live inventory of every agent and AI application in the environment: the model it runs, the tools it connects to, the APIs it calls, and the system prompt it operates under. It updates as agents evolve, capturing changes that happen without a single line of code being written.

- Assess: Lasso's red teaming probes each agent across three levels of depth: single-turn testing against a continuously updated library of adversarial payloads, multi-turn testing through offensive agents designed to find fragile intent through sustained conversation, and bespoke attack scenarios tailored to the specific tools, workflows, and access permissions of the target application.

- Protect: Where vulnerabilities are found, Lasso builds adaptive guardrails automatically, turning red team findings directly into runtime policy. That runtime layer monitors agent activity continuously across tools, memory, and decision points. Threats are detected as they unfold.

The loop between testing and enforcement is what makes governance possible for systems that don't stand still.

Conclusion

Agentic AI is already making decisions with real business consequences. And the environments it operates in are too dynamic and interconnected for static governance frameworks to keep up. The risks covered in this article aren't future risks. They're present in any enterprise that has deployed agents without the testing and enforcement infrastructure to govern them continuously.

Getting that infrastructure in place starts with understanding what your agents are actually doing. Lasso makes that possible, from the first line of code to runtime.

FAQs

What are the biggest agentic AI governance challenges for enterprises?

Beyond the foundational unpredictability, the practical challenges include:

- Maintaining a complete inventory of agents across code and cloud environments.

- Governing systems that take real actions across connected tools and APIs.

- Keeping testing and enforcement current as agents change continuously, often without any code being written.

Over and above all of this, there is the complex problem of intent. Intent security is the hardest governance challenge of all. Unlike permissions, policies, or access controls, intent cannot be statically defined, enumerated, or enforced in advance. It has to be continuously discovered.

How do enterprises maintain visibility into agentic AI?

Visibility in agentic environments requires more than usage logs or approved tool lists. It means knowing the following about every agent in your environment, and keeping that picture current as agents evolve:

- The model it runs.

- All tools it connects to.

- Any and all APIs it calls.

- The contents of its system prompt.

That requires connecting directly to code repositories and cloud platforms to build a live inventory, rather than relying on point-in-time audits that describe a system that may already have changed.

How does Lasso improve AI governance visibility?

Lasso builds a continuously updated inventory of every agent and AI application across code and cloud, mapping dependencies, model versions, tool connections, and system prompts in a single view. From there, automated red teaming assesses each agent's risk profile and identifies vulnerabilities (including fragile intent) before they're exploited.

What is fragile intent and why does it matter for AI security?

Fragile intent refers to the specific conditions under which an attacker can persuade an agent to pursue the wrong goal. A single prompt asking an agent to do something outside its intended scope will usually fail. But a sustained, adaptive conversation that gradually reframes the request is a different story.

Because agentic AI is non-deterministic, there is no complete list of all the ways a given agent can be manipulated. That means the goal of security testing isn't exhaustive coverage; it's finding and hardening the points where intent breaks down.

How do enterprises govern autonomous AI agents?

Governing autonomous agents requires addressing the full scope of what they do. That means maintaining a live inventory of every agent and its dependencies, testing each agent's behavior under adversarial conditions including multi-turn and goal-hijacking scenarios, enforcing policies that reflect actual agent activity rather than generic input/output rules, and monitoring agent actions continuously across tools and decision points at runtime. Governance that only operates at the conversation layer misses most of the risk surface in agentic environments.
