What Is Agentic AI?

Most enterprise AI deployments started with a chatbot, a copilot, or a model answering questions inside a defined interface. Agentic AI is a different category. These systems plan, decide, and act, often without a human reviewing each step.

The same prompt can produce different actions depending on what the agent remembers, what tools it has access to, and what it has already done in the current session. That non-determinism is the point. It's also what makes agentic AI fundamentally harder to secure than anything that came before it.

Why Agentic AI Best Practices Matter for Enterprises

Agents are being built across the enterprise simultaneously:

- In code by engineering teams.

- On cloud platforms by operations.

- Through low-code builders by business units that have never had a security review conversation.

Each one is a non-human identity operating inside your environment with tool access, memory, and the ability to take actions that can't always be undone.

Legacy security models were built around applications that behave predictably. An agent connected to a payment system, a customer database, and the internet, running on a model that may have been silently upgraded overnight, is not that.

Securing it requires visibility into what it is doing, right now, and the ability to raise a flag when it starts doing things you didn’t think it would.

Top Agentic AI Best Practices for 2026

A global survey found that 97% of security leaders expect a material AI-agent-driven security incident this year, with only 6% of security budgets currently allocated to this risk.

These best practices form the baseline of defense for a world where the tech has outrun the controls.

1. Define Clear Boundaries for Agent Tasks and Actions

Agentic AI tools fail dangerously when they operate without explicit task scope. Security teams should define agent task boundaries in policy, not just in prompts. That means specifying which tools an agent can invoke, which data sources it can access, which systems it can write to, and under what conditions it should halt and escalate.

Boundaries enforced at the infrastructure and authorization layer are far more reliable than boundaries encoded in natural language instructions, which can be manipulated or misinterpreted.

2. Apply Least-Privilege Access to Tools and Data

Agents inherit the permissions of the identities they operate under, which can be over-provisioned. An agent that can read, write, call external APIs, and execute code under a single broadly-scoped identity is a fully-loaded attack vector in itself.

The access model needs to reflect how agents actually work: discrete tasks, specific tools, bounded data. In practice, this means:

- Dedicated service identities per agent role, not shared credentials across workflows.

- Tool access scoped to allowlists: agents should not see capabilities they have no business using.

- Time-bounded tokens for sensitive operations, expiring when the task does.

Persistent, over-scoped credentials are the condition that turns a successful prompt injection into a serious breach. Least privilege is one of the first lines of defense you have.

3. Monitor Agent Behavior and Outputs Continuously

Standard application monitoring does not translate cleanly to agentic systems. Agents make sequences of decisions drawing on runtime context, so a single log line rarely tells you what actually happened.

Effective monitoring requires tracing the full reasoning chain: which tools were called, in what order, with what inputs, and what the agent's stated rationale was at each step. Behavioral baselines per agent role are essential. Anomalies to flag include:

- Unexpected tool usage or access outside normal task scope

- Instruction sequences that deviate from established patterns

- Elevated output volume with no corresponding task trigger

Without this visibility, detection of compromise or manipulation is largely reactive.

4. Implement Real-Time Controls for Prompts and Data Flows

Adversaries embed malicious instructions in content the agent is designed to retrieve and trust: a document, a web page, a tool response. The agent then executes them because it cannot distinguish between legitimate context and weaponized input.

The threat surface widens with every external source an agent touches.

- Structural isolation between retrieved content and system instructions closes the most direct path.

- Inline classifiers catch injection attempts before they reach the reasoning layer.

- Output validation before the agent acts on tool call results prevents the second stage of the attack, where a manipulated agent calls a tool it was never meant to access.

At scale, these controls need to operate in real time. A detection that fires after the action completes is forensics, not defense.

5. Standardize Policies Across Agents and Workflows

Most enterprise environments have dozens of agents across teams. They’re built on different frameworks, with security configurations that are not consistent with each other. Attackers look for the weakest node. In a multi-agent environment, that is usually the one nobody is watching.

Standardization matters here because inconsistency creates exploitable seams. An agent with looser tool access controls, a different logging configuration, or no defined escalation behavior is an entry point. And in a pipeline where agents delegate to other agents, a compromised node can propagate malicious instructions upstream without triggering a single alert.

A centralized agent registry, consistent permission models, and a defined review process for new agent capabilities before production are what make cross-agent monitoring meaningful.

Agentic AI Architecture: Core Design Challenges

Discovering and Mapping Agentic AI Usage Across the Enterprise

Identifying Shadow Agents and Unapproved AI Tools

More than 80% of technical teams have already moved past planning into active testing or production deployments. But only 14% of those agents are going live with full security and IT approval according to Gravitee. The rest are running somewhere in the enterprise without visibility, without scoped permissions, and without audit logging.

For security teams, every unregistered agent is an identity operating inside the environment with tool access and data reach that no one has reviewed. Agents appear through developer experimentation, third-party SaaS integrations, and business units moving faster than procurement. Each one represents an attack surface that doesn't appear on any inventory.

Mapping Agent Workflows Across Applications and Systems

An agent's risk profile is determined by what it connects to. A single agent may touch:

- An enterprise CRM

- The organization’s code repository

- Internal databases

- An external API

That’s one identity and one reasoning process linking four attack surfaces. Mapping agent workflows means tracing every integration, every tool call path, and every data source an agent can reach, as workflows evolve and new tools are added. Without that map, security teams are responding to incidents they cannot reconstruct.

Detecting Anomalous Agent Behavior Across Users and Sessions

Agents that operate across multiple users and sessions accumulate patterns that should be predictable. That predictability is the baseline security teams need to detect when things have gone wrong. An agent that suddenly queries data outside its normal scope, or calls tools it has never used in prior sessions, it’s not necessarily malfunctioning. It may be operating exactly as an attacker wants it to.

Detection requires per-agent behavioral baselines, cross-session correlation, and alerting that fires on deviation rather than on known-bad signatures. Signature-based detection does not work against prompt injection and agent manipulation, because the agent's actions are technically legitimate. The only signal is behavior that doesn't fit.

How Attackers Exploit Data in Agentic AI Workflows

Securing Agentic AI Deployment in Enterprise Environments

Enforce Identity-Aware Access Controls

Agents are non-human identities operating inside enterprise environments with API tokens, service accounts, and application credentials. If they’re operating under a broadly-scoped service identity, they can become credentialed proxies that an attacker can manipulate without ever touching the underlying system directly.

Identity-aware access controls for agentic AI need to go beyond role assignment. They need to reflect what an agent is actually doing at runtime:

- Which tools it is calling

- Which data sources it is querying

- Whether that activity matches its defined task scope

Static permission models applied at deployment drift out of alignment as agent workflows evolve. The controls need to keep pace.

Secure and Terminate Compromised AI Sessions

An agent session that has been manipulated through prompt injection does not look compromised from the outside. The agent continues to operate using legitimate credentials, calling authorized tools, producing outputs that follow expected formats. The attack succeeds precisely because nothing breaks in an obvious way.

Securing AI sessions means establishing what normal looks like for each agent across its full interaction history, and detecting deviations from that baseline in real time. Terminating a compromised session requires the ability to act on that detection instantly, before the agent completes the action it has been redirected toward.

Monitoring Runtime Agent Behavior and Actions

Monitoring agent outputs is not the same as monitoring agent behavior. An agent that returns a harmful response is a clear problem. An agent that has been subtly redirected toward a goal it was never meant to pursue is harder to catch. This is especially true if it is still returning responses that look reasonable on the surface.

Runtime monitoring needs to trace the full reasoning chain:

- Which tools were called, in what sequence, and with what inputs

- Whether the agent's behavior across the session is consistent with its intended purpose

This is the visibility gap that makes agentic AI testing so different. It’s also why testing needs to cover single-turn, multi-turn, and bespoke workflow scenarios in order for a monitoring program to be meaningful. You cannot detect fragile intent at runtime if you have never mapped what it looks like under pressure.

Limiting Autonomous Execution Based on Risk Levels

Agentic AI is built for full automation, but not every operation should complete without a human seeing it first. The architecture needs a risk classification layer that sits between the agent's decision and the action it wants to take.

High-risk operations should require explicit authorization before the agent proceeds:

- Writing to production systems

- Executing code outside a sandbox

- Initiating financial transactions

Lower-risk operations can run autonomously within defined parameters. The line between those categories shifts depending on the systems it is connected to and the context of the current task. The classification needs to be dynamic, and the authorization gates need to be enforced at the infrastructure level. The prompt level is already too late.

Security and Control Tools for Agentic AI Workflows

Agent Observability and Monitoring Platforms

Observability platforms built for agentic AI trace the full execution chain: tool calls, memory state, decision points, and behavioral patterns. Evaluating inputs and outputs is simply not enough.

For security teams, this is the foundation everything else depends on. You cannot detect a compromised agent, investigate an incident, or establish a behavioral baseline without visibility into what the agent did and why.

Runtime Threat Detection and Prompt Defense Platforms

Runtime threat detection platforms operate inline, analyzing inputs before they reach the agent's reasoning layer and flagging outputs before the agent acts on them.

To be effective, this needs to go beyond content classification, because what really matters is adversarial intent. An input that looks benign in isolation can be a staged attack when viewed in the context of what the agent has already been told. Effective platforms account for that, and evaluate each interaction against the full session.

AI Attack Surface and Extension Security Platforms

Every tool an agent can call, every API it connects to, and every MCP server in its environment is part of the attack surface.

Extensions and third-party integrations are particularly high-risk. They expand agent capability rapidly, often without the security review that internal tooling receives. A malicious or compromised extension can manipulate agent behavior or escalate permissions without triggering any conventional security alert. Platforms in this category map the full extension and integration landscape, identify overprivileged connections, and surface attack paths that only become visible when you look at the agent's environment as a whole.

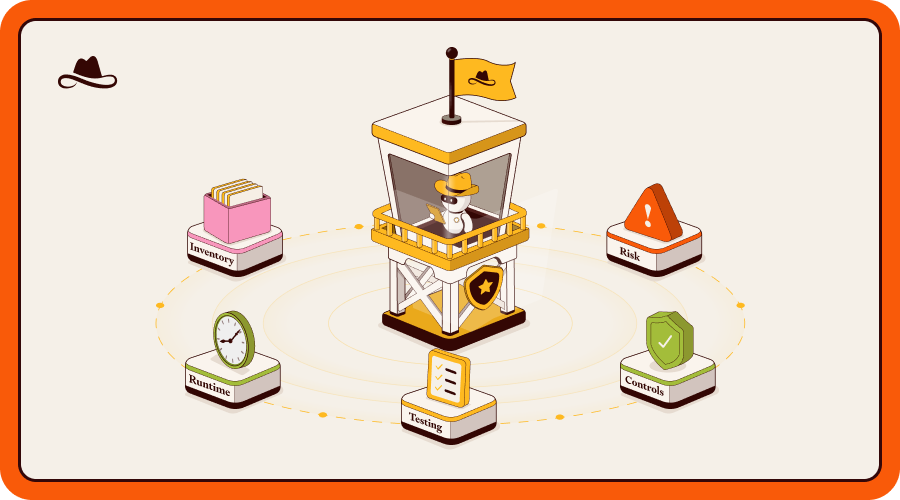

AI Security Posture Management Solutions

An agent that passed a security review at deployment is not necessarily secure six months later. Models update, tool connections change, and new agents get spun up by teams that never engaged security. The overall posture drifts without anyone making a deliberate decision to change it.

AI Security Posture Management solutions provide continuous assessment across the full agent inventory:

- Tracking configuration drift.

- Identifying agents operating outside defined policy.

- Surfacing posture gaps before they become incidents.

For enterprises running agents at scale, this is what makes security measurable rather than assumed.

How Lasso Provides Visibility and Control for Agentic AI

Most enterprises don't have a complete picture of the agents running in their environment. They know about the ones engineering built and deployed through a formal process.

They don't know about the ones built on low-code platforms by business teams, the ones that shipped without a security review, or the ones whose behavior changed when the underlying model was updated without anyone touching the code.

Discover

Lasso starts by solving that. The platform builds a full inventory of every agent and AI application across code and cloud, mapping each one's dependencies: the model it runs on, the tools it connects to, the APIs it calls, the data sources it can

reach. That inventory is the foundation. Without it, there is no baseline to defend and no way to prioritize what needs to be fixed first.

Assess

With the inventory in place, Lasso builds a dependency graph for each application, mapping every AI component and how it connects: the underlying model, databases, MCP servers, external APIs, and any other integrated services. This is the AI Security Posture Management (AISPM) layer, analyzing configurations before any dynamic testing begins. This stage identifies insecure design patterns, overprivileged connections, and attack paths that exist by construction rather than by exploitation.

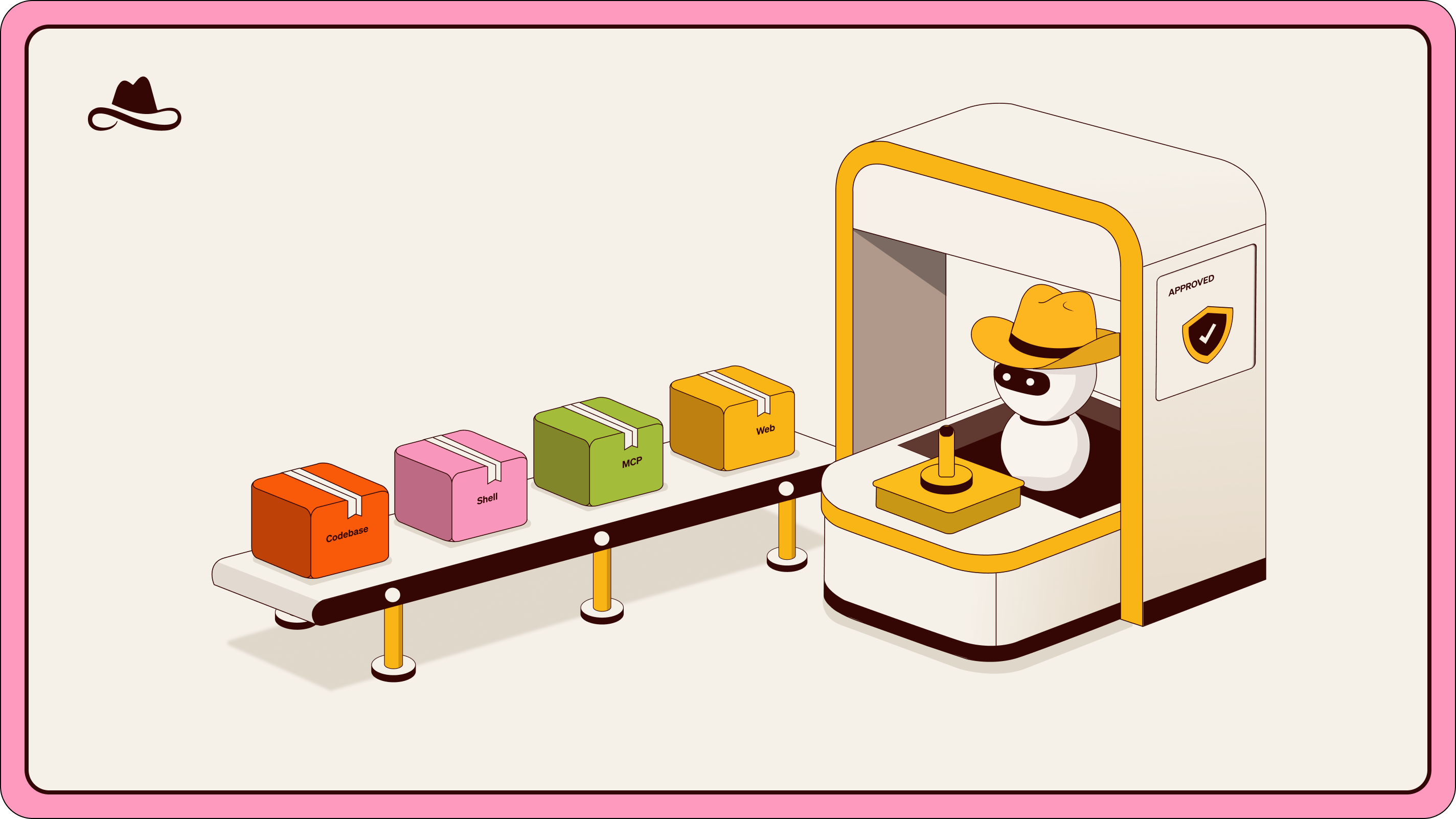

From there, Lasso runs automated red teaming across three layers:

- Single-turn testing covers the prompt and response boundary, running adversarial payloads across a library of obfuscation techniques mapped to the OWASP Top 10 for Agentic Applications.

- Multi-turn testing goes further, using offensive agents to probe for fragile intent through sustained conversation. It finds the specific conditions under which an agent can be redirected toward a goal it was never meant to pursue.

- Bespoke testing is built around the actual agent: its tools, its access permissions, and its role in a real business workflow. That last layer is where goal hijacking and cross-system exploitation get surfaced, because those attacks only become visible when you test the agent as it actually operates.

Protect

Where it finds vulnerabilities, Lasso builds adaptive guardrails automatically, translating red teaming findings into runtime policy without requiring security teams to write rules from scratch. Runtime protection then runs continuously, detecting and responding to threats as agents operate in production.

Detect and Respond

Runtime protection runs continuously, monitoring agent behavior against established baselines and responding to threats as agents operate in production. This surfaces the activity that indicates an agent has been manipulated or has drifted from its intended purpose, and to act on it before the damage is done.

The loop from discovery to assessment to protection to detection is what makes security posture manageable for systems that don't stand still.

Conclusion

Agentic AI is not coming. It is already running inside enterprise environments, connected to production systems, operating under identities that carry significant access, and making decisions that can't always be reversed.

The best practices in this article are a starting point: clear task boundaries, least-privilege access, human oversight for high-risk operations, continuous behavioral monitoring, and security that covers the full agent lifecycle from code to cloud. None of them are exotic. Most enterprises skip them because deployment moved faster than the security conversation did.

FAQs

What are the most important agentic AI best practices?

The most critical practices center on the fact that agents act, not just respond. That means:

- Defining explicit task boundaries enforced at the infrastructure level,

- applying least-privilege access to every tool and data source an agent can reach,

- and requiring human authorization for irreversible operations.

Beyond those fundamentals, continuous behavioral monitoring and automated red teaming are key. Without them, you may have a security posture that looks good at deployment, but doesn’t hold over time.

Discover Lasso’s solution for AI Agents Security.

How do enterprises secure autonomous AI agents against manipulation and misuse?

The starting point is visibility. You cannot secure agents you don't know exist, and most enterprises have agents running across engineering, operations, and business teams without a unified inventory.

From there, security requires controls that match how agents actually work:

- identity-aware access scoped to specific tasks.

- Behavioral baselines that surface anomalous activity across sessions.

- Human checkpoints before high-risk actions complete.

Prompt injection is the most common manipulation vector. Structural isolation between retrieved content and system instructions, combined with inline threat detection, is what prevents a weaponized input from becoming an executed action.

What security risks are unique to agentic AI?

Several agentic AI risks are novel enough to warrant their own security discipline:

- Prompt injection via external content allows attackers to embed malicious instructions inside documents, API responses, or data fields that the agent is designed to trust.

- Fragile intent describes the specific conditions under which an agent can be persuaded, through sustained conversation. This only surfaces through multi-turn adversarial testing, not single-prompt checks.

- In multi-agent pipelines, a compromised node can propagate malicious instructions upstream without triggering any conventional alert.

And because agents operate under service identities with broad access, a successful manipulation doesn't require breaching a system directly. The agent is already a credentialed proxy inside it.

How does Lasso improve visibility across agentic AI workflows?

Lasso builds a complete inventory of every agent and AI application across code and cloud, mapping dependencies at the level that matters for security: which model it runs on, which tools it connects to, which APIs it calls, and which data sources it can reach.

That inventory spans agents built by engineering teams in code repositories and agents deployed through cloud platforms or low-code builders by business teams. From that foundation, Lasso runs continuous red teaming and runtime monitoring, so visibility isn't a point-in-time snapshot but an ongoing picture of what each agent is doing and whether that matches what it was designed to do.

Learn more about Lasso’s Automated AI Red Teaming solution.

Can Lasso secure multi-agent pipelines and complex agentic workflows?

Yes. Multi-agent environments introduce risks that single-agent security approaches don't address. When agents interact with each other, new attack paths open up that are invisible when you test each agent in isolation:

- Implicit trust between agents that an attacker can exploit to pass manipulated outputs upstream

- A compromised node propagating malicious instructions across the pipeline without triggering a conventional alert

- Lateral movement across connected systems through tool calls that look legitimate from the outside

- Goal hijacking at the pipeline level, where the final action bears no resemblance to the original instruction

Lasso's bespoke red teaming is built around the actual agent in its actual environment, including its role within a wider pipeline. Runtime monitoring tracks behavior across the full pipeline rather than at individual agent boundaries, so anomalous activity doesn't disappear into the space between agents..

.avif)

.png)