From Content Security to Intent Security

AI agents are evolving from generating content to executing real actions: approving payments, modifying permissions, and triggering workflows across enterprise systems.

But security controls haven’t evolved at the same pace. Most existing tools weren’t designed to govern autonomous actions, or to determine whether an agent should take a specific action in a given context at a given moment.

Download the report to learn:

- Why traditional security controls break down once AI agents operate across tools and workflows

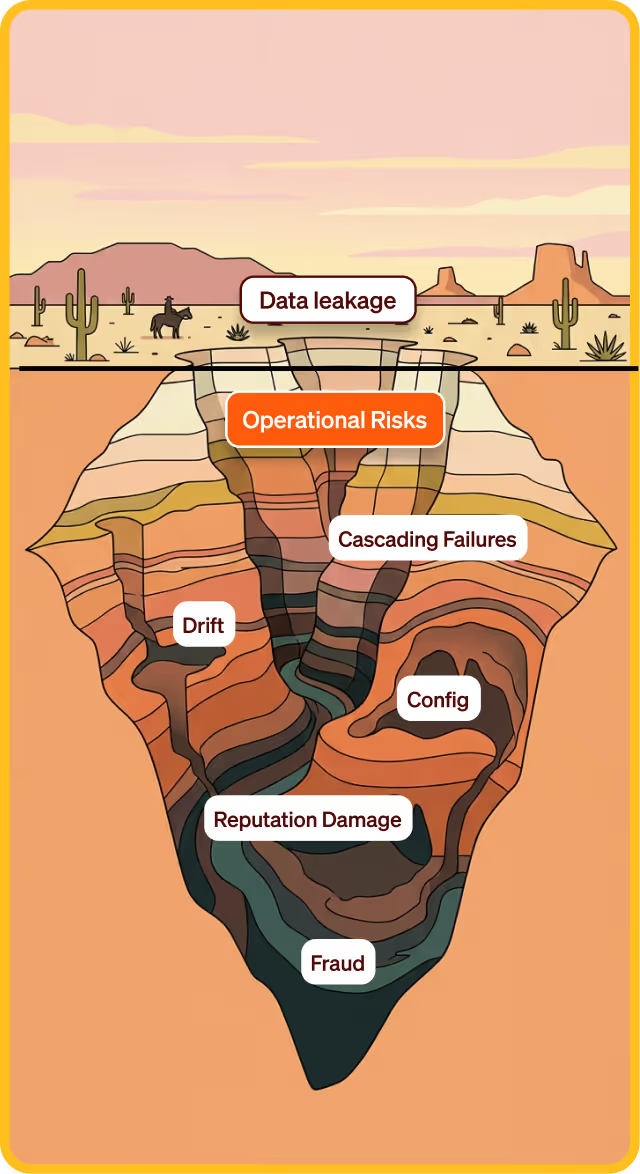

- The three critical security gaps exposing organizations to a new class of agentic risk

- Why security must shift from content moderation to behavior- and context-aware controls

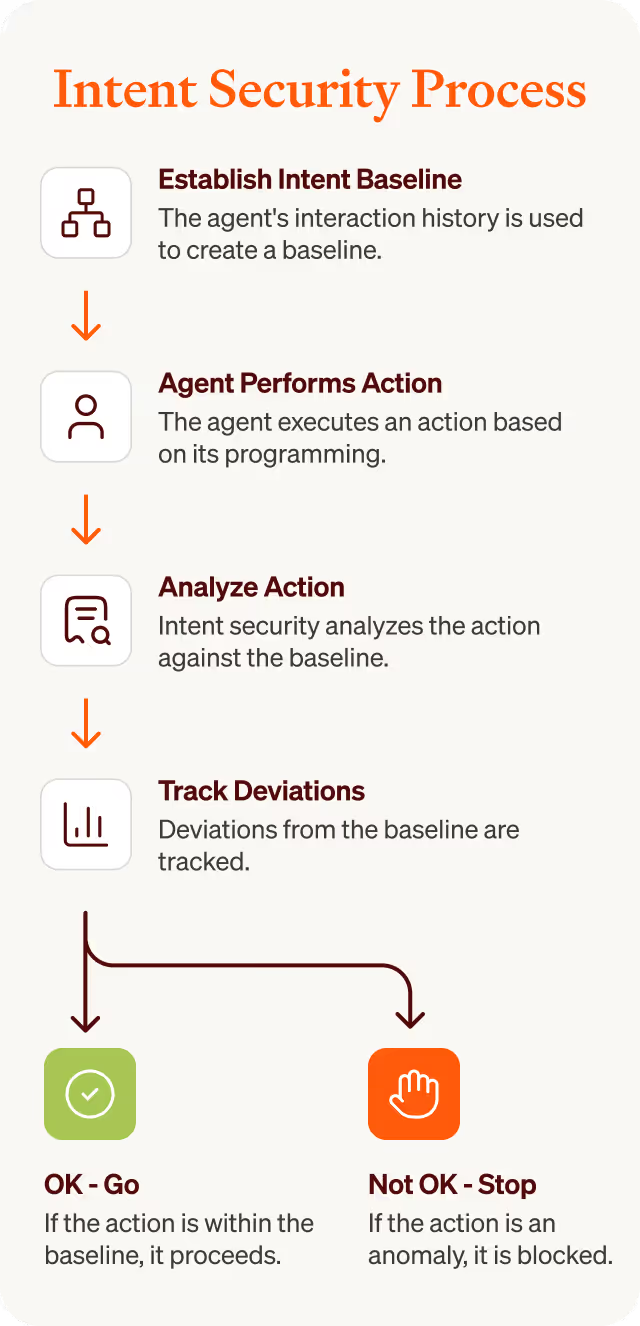

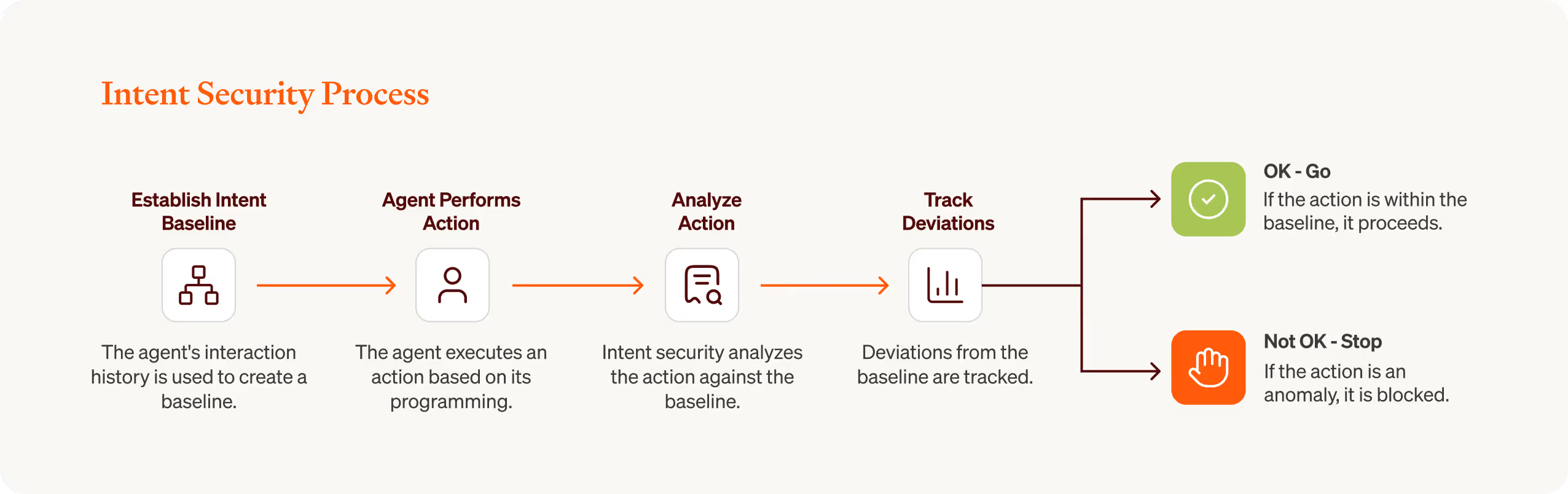

- How behavioral baselines and intent alignment help detect and stop unauthorized agent actions in real time

The 3 Agentic Security Gaps and How to Address Them

From Designed State to Emergent Context

AI agents generate dynamic, unbounded context shaped by conversation history, retrieved data, tool outputs, and prior reasoning. Their state is emergent and continuously influences future decisions.

From Communication to Action

Modern agents are empowered to execute tool calls. They access APIs, modify database records, send emails, and trigger autonomous workflows across external systems.
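
To make the shift from communication to action concrete, here is a minimal sketch of a check that runs before a tool call executes and asks whether the proposed action fits the task the agent was given. All names here (`ToolCall`, `TaskContext`, `authorize`, the tool names, and the payment limit) are hypothetical and chosen for illustration. This is not the report's method or any product's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ToolCall:
    """A proposed agent action (hypothetical shape, for illustration only)."""
    tool: str
    args: dict = field(default_factory=dict)


@dataclass
class TaskContext:
    """What the agent was asked to do and what that task permits (assumed fields)."""
    task: str
    allowed_tools: set
    max_payment: float = 0.0


def authorize(call: ToolCall, ctx: TaskContext) -> tuple[bool, str]:
    """Decide whether a proposed tool call fits the current task context."""
    # Reject any tool that the current task does not call for.
    if call.tool not in ctx.allowed_tools:
        return False, f"tool '{call.tool}' is outside the scope of task '{ctx.task}'"
    # Even an allowed tool can be misused, so check the arguments as well.
    if call.tool == "approve_payment" and call.args.get("amount", 0) > ctx.max_payment:
        return False, "payment exceeds the limit for this task"
    return True, "ok"


ctx = TaskContext(
    task="pay invoice",
    allowed_tools={"approve_payment", "send_email"},
    max_payment=500.0,
)

# A payment within the task's limit is allowed.
allowed, reason = authorize(ToolCall("approve_payment", {"amount": 120.0}), ctx)

# A permission change was never part of the task, so it is blocked.
blocked, why = authorize(ToolCall("modify_permissions", {"role": "admin"}), ctx)
```

The point of the sketch is that the decision depends on the action and its context, not on the text the agent produced. A real system would derive the context from richer signals than a static allowlist.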

From Static Code to Dynamic Logic

Agent behavior is stochastic. It is shaped by fixed weights that define capability, variable context such as prompts and RAG results, and sampling parameters that introduce randomness into each output.