The OWASP AI Red Teaming Landscape: Why Securing AI Requires a New Security Stack

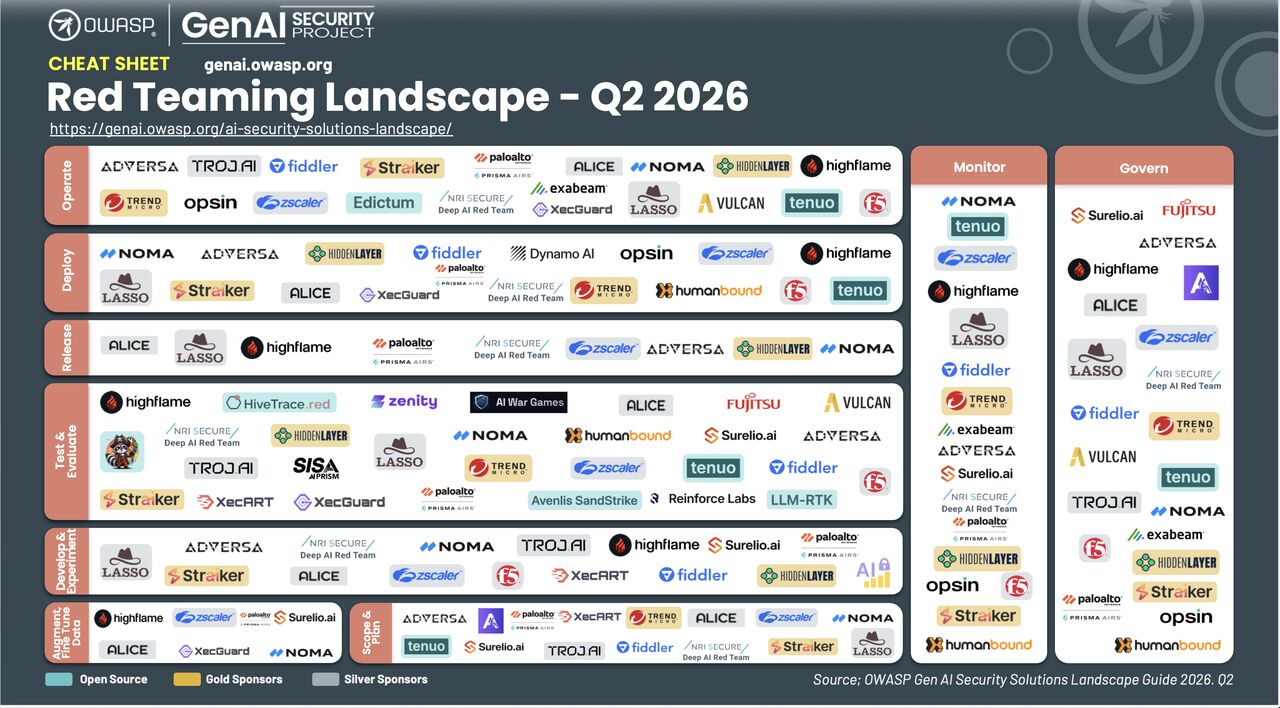

The latest Agentic AI Red Teaming Landscape (Q2 2026) from OWASP is not just another framework, it is a reflection of a fundamental shift in how security must be approached in the age of generative and agentic AI.

For the first time, we are seeing a structured attempt to formalize what many security teams are already experiencing: AI systems cannot be secured using traditional application security paradigms. The reason is simple AI systems are not static software systems. They are dynamic, probabilistic, and deeply interconnected with data, tools, and external environments.

This shift introduces a new class of vulnerabilities that are not tied to code in the traditional sense, but to behavior. Prompt injection, jailbreaks, tool poisoning, memory manipulation, data poisoning, hallucinations, and agent misuse are not edge cases; they are intrinsic properties of how these systems operate. As a result, security must move from static validation to continuous adversarial interaction.

From Deterministic to Behavioral Attack Surfaces

The Expansion of the Attack Surface in Agentic AI

The complexity increases significantly with the rise of agentic AI systems. These systems are no longer passive responders; they are active participants in workflows. They execute actions, call APIs, chain decisions, and operate across trust boundaries.

This creates entirely new categories of agentic attacks. Instead of simply manipulating output, attackers can influence decision-making processes, redirect workflows, or exploit tool integrations.

The OWASP framework highlights scenarios such as tool poisoning (injecting malicious instructions through tool responses), cross-agent prompt injection (where one agent manipulates another via shared context), tool misuse, privilege escalation within agent chains, cross-tenant data exposure, and protocol-level manipulation. These are not theoretical risks, they emerge naturally from how agents are designed to operate.

The implication is clear: agentic security must evolve from protecting outputs to protecting behavioral execution paths, or as we see it in Lasso, the intent security aspects of why, how, and what your agents are doing.

The OWASP Lifecycle Model as a Security Blueprint

One of the most important contributions of the OWASP AI Red Teaming Landscape is its lifecycle model. Rather than treating security as a gate before production, it frames it as a continuous process that spans planning, development, deployment, and operation.

Scope and Planning as a Security Function

Security in agentic AI systems begins before a single model is deployed. The scope and planning phase focuses on identifying assets, mapping attack surfaces, and defining adversarial scenarios. This is where organizations attempt to understand what they are actually securing.

What is notable in the OWASP approach is the emphasis on integrating red and blue perspectives at this stage.

Threat modeling is no longer a theoretical exercise, it is directly tied to simulated adversarial behavior. For agentic systems, this means explicitly modeling attacks where a malicious tool outputs redirect agent behavior, or where a compromised sub-agent propagates harm across an orchestration chain.

Attack paths are defined not just as possibilities, but as executable scenarios that can later be tested and validated.

This early integration is critical, because it establishes traceability between identified risks and implemented controls. Without this linkage, security efforts tend to become fragmented, with no clear connection between vulnerabilities discovered in testing and protections deployed in production.

Data and Model Adaptation as a Primary Risk Vector

A significant portion of AI risk originates not from the model architecture itself, but from the data it is trained on and the way it is adapted over time. OWASP dedicates an entire phase to this, emphasizing risks such as data poisoning, malicious artifacts, and bias or toxicity amplification. In agentic systems, this also includes poisoned tool descriptions or retrieval-augmented generation (RAG) content that causes agents to behave unexpectedly at runtime.

What makes this phase particularly challenging is that these risks are often silent. A poisoned dataset does not necessarily cause immediate failure; instead, it subtly alters model behavior in ways that may only become visible under specific conditions. This makes traditional validation insufficient.

Red teaming in this context involves actively introducing adversarial data and observing how the system responds. Blue teaming focuses on ensuring data integrity, tracking provenance, and enforcing policies around data usage. The interaction between the two creates a feedback loop where data-related vulnerabilities are not only identified, but continuously monitored and mitigated.

Development and Pre-Production Testing

During development, the focus shifts to model behavior under controlled conditions. This is where techniques such as prompt injection, jailbreak testing, and agent logic manipulation are applied systematically. For agentic systems, this specifically includes testing tool call sequences, validating agent decision boundaries, and red teaming multi-agent orchestration for privilege escalation paths.

The OWASP model highlights the importance of combining these adversarial techniques with traditional security practices. Code scanning, plugin validation, and infrastructure security still play a role, but they are no longer sufficient on their own. They must be augmented with behavior-driven testing that reflects how agentic AI systems are actually used and abused.

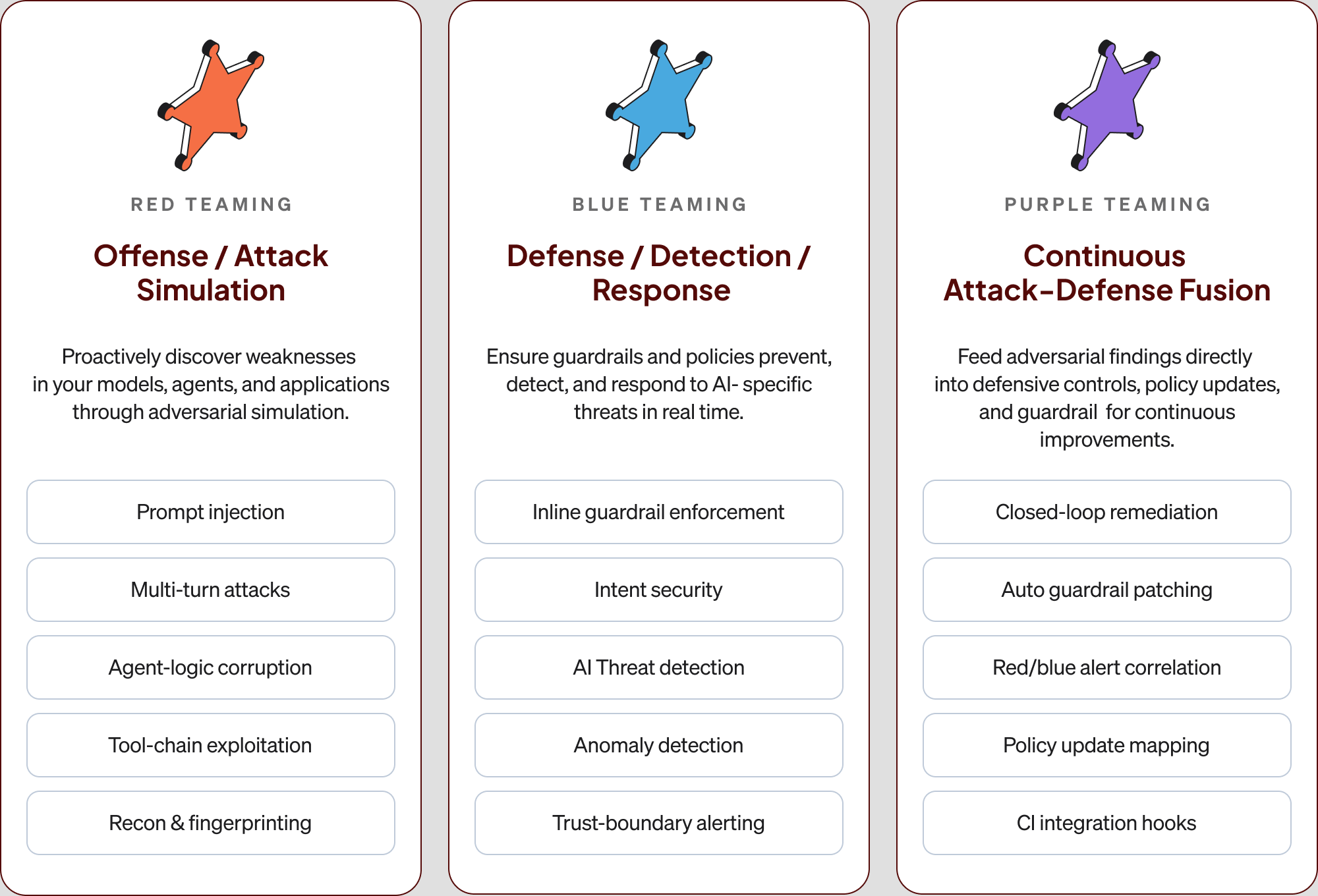

This phase also introduces the concept of shared environments where red and blue teams can operate simultaneously. Instead of sequential testing and fixing, there is a continuous interaction where attacks and defenses evolve together. This is a key step toward achieving the “purple teaming” model that OWASP promotes.

Testing, Assessments, and the Measurement of Risk

One of the most technically demanding aspects of agentic AI security is measuring resilience. Unlike traditional systems, where pass/fail criteria can often be clearly defined, AI systems require probabilistic evaluation.

OWASP addresses this by introducing structured adversarial testing methodologies. Multi-turn attacks, prompt chaining, and protocol-level exploits are used to simulate realistic agentic threat scenarios. These are not single inputs, but sequences of interactions designed to push the system into failure states.

At the same time, defensive mechanisms are evaluated under both normal and adversarial conditions. The goal is not just to detect vulnerabilities, but to understand how effective the defenses are in practice. This leads to the concept of success thresholds and KPI-driven evaluation, where security is quantified rather than assumed.

Deployment and Runtime Exposure

Deployment marks the transition from controlled environments to real-world conditions, where unpredictability increases significantly. The OWASP framework emphasizes that this is not the end of the security process, but the beginning of a new phase.

At runtime, systems are exposed to a wide range of inputs, including malicious ones. Attackers can exploit tool integrations, manipulate agent workflows, or attempt to escalate privileges. These attacks often occur in ways that were not anticipated during testing.

To address this, OWASP highlights the need for runtime protections such as AI firewalls, policy enforcement mechanisms, and real-time monitoring. These controls must operate at the same speed as the system itself, which introduces significant technical challenges.

Continuous Operation, Monitoring, and Governance

The final stages of the lifecycle focus on maintaining security over time. AI systems do not remain static after deployment; they evolve through updates, new data, and changing usage patterns. In agentic systems, new tools, integrations, and orchestration patterns continuously expand the attack surface.

This creates a moving target for security. Threats evolve, attack techniques adapt, and previously secure configurations may become vulnerable. OWASP addresses this through continuous red teaming, where autonomous agents and fuzzing techniques are used to probe the system on an ongoing basis.

At the same time, monitoring systems collect telemetry and analyze behavior to detect anomalies. The integration of these functions creates a closed-loop system where attacks inform defenses, and defenses adapt based on observed threats.

Governance ties everything together by ensuring that security is measurable, auditable, and aligned with organizational requirements. This includes maintaining audit trails, generating executive-level reports, and ensuring compliance with emerging regulations.

Bridging the Gap Between Theory and Implementation

While the OWASP framework provides a comprehensive blueprint, implementing it in practice is far from trivial. Many organizations struggle because their tooling and processes are not designed for continuous, behavior-driven security.

A common issue is fragmentation. Red teaming, monitoring, and policy enforcement are often handled by separate systems that do not share context. This creates delays between vulnerability discovery and mitigation, during which systems remain exposed.

Another challenge is the lack of real-time protection. Many solutions focus on identifying issues during testing, but do not provide mechanisms to prevent exploitation in production. This creates a false sense of security, where systems appear validated but remain vulnerable under real-world conditions.

Perhaps the most significant gap is the absence of a true feedback loop. Without continuous integration between attack simulation and defense mechanisms, organizations are unable to adapt to evolving threats effectively.

Lasso as an Implementation of the OWASP Vision

Lasso’s architecture is designed around the same principles outlined in the OWASP framework, but with a focus on operationalizing them in real environments.

As enterprises are not confident in their knowledge of the application’s refusal space, and only have tested their inline guardrails and runtime protection, security teams struggle with unforeseen risks in agentic applications.

At its core, Lasso treats AI security as a continuous system rather than a set of isolated functions. Red teaming is not limited to pre-production testing; it is an ongoing process that simulates adversarial behavior across the lifecycle. This includes prompt injection, jailbreak attempts, and agent-level attacks that reflect real-world usage patterns.

Runtime Protection - Know You’re Protected

What differentiates Lasso is its ability to extend this into runtime. Operating at the proxy, API, or AI gateway layer, it is positioned directly in the execution path of AI systems. This allows it to detect and block attacks in real time, with detection times measured in milliseconds and response mechanisms that provide immediate context and remediation guidance.

This runtime capability is critical, because it closes the gap between testing and production. Instead of relying on assumptions about system behavior, Lasso continuously validates and enforces security policies as the system operates.

Equally important is the feedback loop between offensive and defensive functions. Every detected attack contributes to improving the system’s defenses. Guardrails are updated, policies are refined, and detection mechanisms are tuned based on real adversarial activity. This creates a dynamic security posture that evolves alongside the system itself.

In effect, Lasso implements the “purple teaming” model not as a concept, but as a core architectural principle. Offense and defense are not separate functions, they are integrated into a single continuous loop.

What risks you can cover with Lasso’s Automated Agentic Red Teaming

Security risks

Agents operate with real permissions, tool access, and system integrations. When their intent is manipulated through tool poisoning, cross-agent injection, or memory tampering, the result is not a bad response; it’s unauthorized data access, privilege escalation, or compromised infrastructure.

Reputational risks

AI applications are non-deterministic. Customer-facing apps can respond in ways that are off-brand, harmful, or non-compliant.

Economic risks

Agents act on behalf of your organization. If manipulated into making commitments, approvals, or promises outside their intended scope, the business is bound to the consequences.

Offensive and Defensive Security Loop

Lasso’s security approach is built specifically for agentic AI, and operates as a continuous, closed loop where offense and defense operate as a single system with:

A Turning Point for AI Security

The OWASP AI Red Teaming Landscape represents more than a framework, it signals a turning point in how the industry approaches AI security.

As AI systems become more capable and more deeply integrated into business operations, the risks associated with them increase accordingly. These risks are not static, and they cannot be addressed with static solutions.

What is required is a shift toward lifecycle-driven, continuous security models that account for the dynamic nature of AI. This includes not only identifying vulnerabilities, but actively simulating adversarial behavior, enforcing protections in real time, and continuously adapting based on observed threats.

The combination of structured frameworks like OWASP and operational platforms like Lasso provides a path forward. One defines the model; the other makes it executable.

For organizations building and deploying AI systems, the challenge is no longer understanding the risks. It is implementing the systems required to manage them effectively.

And in that sense, AI security is no longer just a security problem, it is an architectural one.

FAQs

.avif)

.png)

.png)