When Model Guardrails Break: How AprielGuard Performed Against 1,500 Adversarial Attacks by Lasso Security

.avif)

TL;DR

What Does AprielGuard Promise?

The AI safety hype cycle continues to accelerate, and ServiceNow AI has recently joined the race with the release of AprielGuard, an open-source guardrails model designed to help secure AI applications.

AprielGuard is an 8-billion-parameter model specifically built to act as a unified guardrail layer for AI systems. According to its positioning, the model is intended to help developers detect safety risks, prevent malicious prompts, and monitor agent behavior across modern AI workflows. The model is open source and available via Hugging Face, allowing developers and researchers to deploy and run it freely in their environments.

As AI applications and autonomous agents grow in adoption, guardrails are increasingly positioned as a key control layer in the AI stack. AprielGuard aims to provide such a layer by combining safety detection, attack mitigation, and workflow monitoring into a single model.

To better understand how the model performs under real adversarial conditions, the Lasso research team conducted a red-team exercise to test AprielGuard’s effectiveness against prompt injection and other adversarial techniques.

AprielGuard’s Promised Capabilities

One of the model’s primary features is safety risk detection. AprielGuard claims to detect 16 different categories of safety risks, including harmful or sensitive content such as hate speech, misinformation, and illegal activities. This classification capability is intended to help organizations enforce safety policies when interacting with generative AI systems.

The model also focuses on attack mitigation. AprielGuard is designed to identify common adversarial techniques targeting AI models, including prompt injection attacks, jailbreak attempts, and exploitation in multi-agent environments. These attacks attempt to manipulate a model into ignoring instructions or exposing sensitive information, making guardrail protection critical in production deployments.

Another capability is workflow monitoring within agent systems. Unlike traditional moderation models that only inspect user input, AprielGuard is designed to monitor the internal behavior of AI agents.

This includes examining tool calls, reasoning traces, and agent actions during execution. By analyzing these elements, the model attempts to detect violations or unsafe behaviors within agent workflows rather than focusing solely on the user’s prompt.

Lasso’s Red Team Setup to Test AprielGuard

To evaluate AprielGuard’s real-world security effectiveness, Lasso conducted a red-team exercise to simulate how the guardrail would operate when deployed as the primary protection layer in an AI application.

Environment Setup

- Model Provisioning: Allocated remote GPUs to spin up a stable inference environment for the model.

- Inference Server: Implemented a minimal inference server to expose the model over HTTP for red-teaming purposes.

- Secure Tunnel: Used ngrok to create a secure tunnel to the local server, enabling external API calls and allowing Lasso's red team tooling to interact with the model as an application would.

The testing environment was intentionally kept minimal and controlled in order to isolate variables and focus exclusively on the guardrail’s performance. The objective was to reproduce a realistic scenario in which a developer deploys AprielGuard as the main defense mechanism protecting an AI system.

In this setup, any request that successfully bypassed the guardrail was considered a successful compromise, or “hacked.” If the guardrail failed to detect a malicious prompt and allowed it to pass through, it would theoretically be forwarded directly to the application logic or underlying LLM.

The environment consisted of several components. First, the model was provisioned on remote GPUs, allowing the team to create a stable inference environment capable of handling testing workloads. A minimal inference server was then implemented to expose the model through an HTTP interface, enabling external systems to interact with the guardrail in the same way a production application would.

To allow remote testing and interaction with the model, the team used ngrok to create a secure tunnel to the inference server. This allowed Lasso’s red teaming infrastructure to interact with the model via external API calls, replicating real deployment conditions.

Importantly, the team did not build a full AI application or attach an LLM backend. The goal was to test the model in isolation, ensuring that any successful attack represented a direct failure of the guardrail itself rather than another component in the system.

Once the environment was operational, the team launched a large set of adversarial tests using Lasso’s automated Red Teaming engine. This engine begins with a base malicious prompt representing a core adversarial intent and then systematically applies multiple obfuscation techniques to generate variations of the attack.

This methodology enables the testing process to evaluate whether the model can detect how an attack is hidden, rather than simply identifying obvious harmful language. Obfuscation techniques are commonly used in real attacks to bypass safety filters while preserving the malicious intent of the prompt.

Key Research Results and Attack Analysis

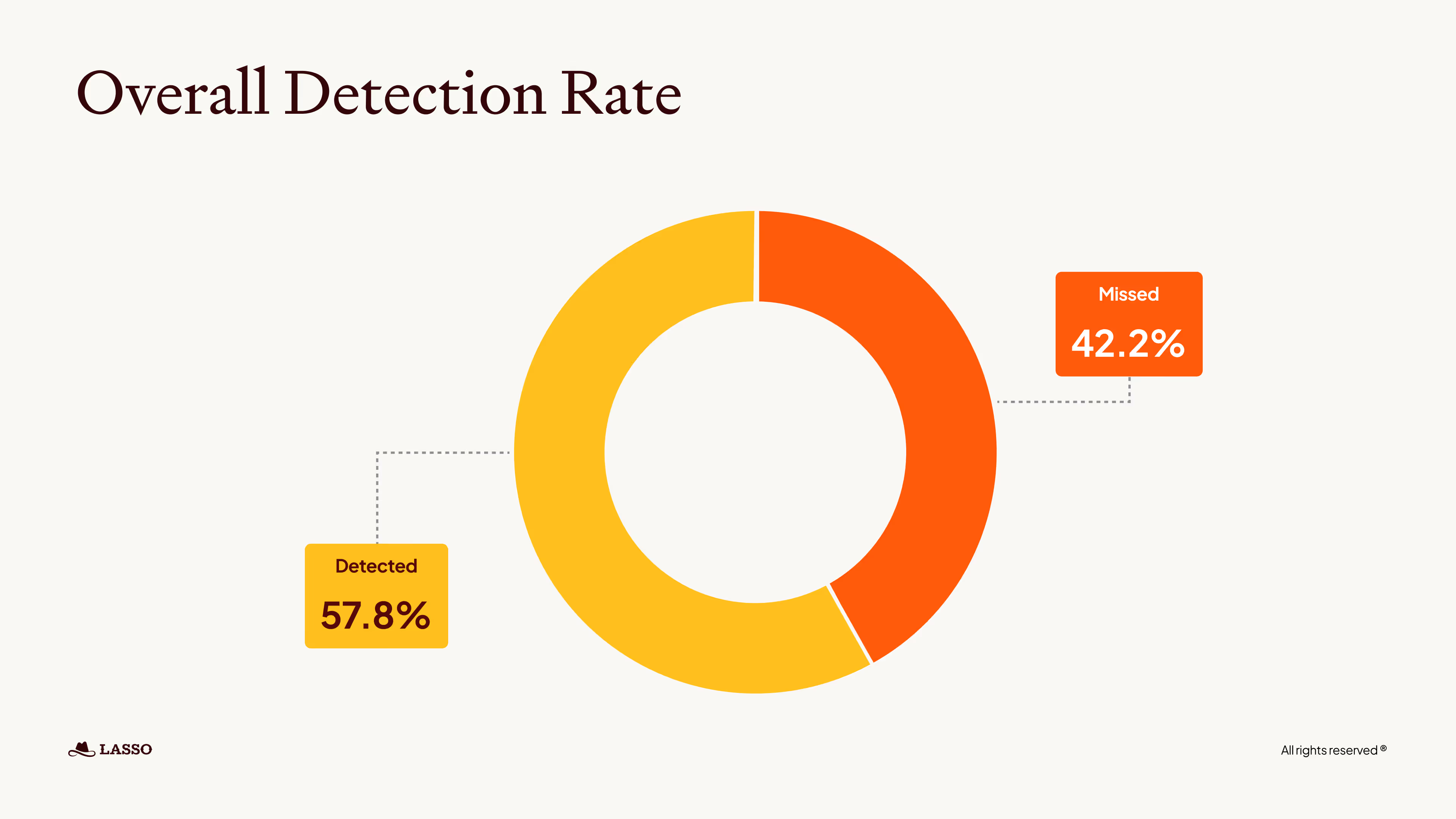

Lasso’s research found a 42.2% bypass attack rate, while only 883 attacks (57.8%) were correctly detected and blocked.

Lasso Red teaming results

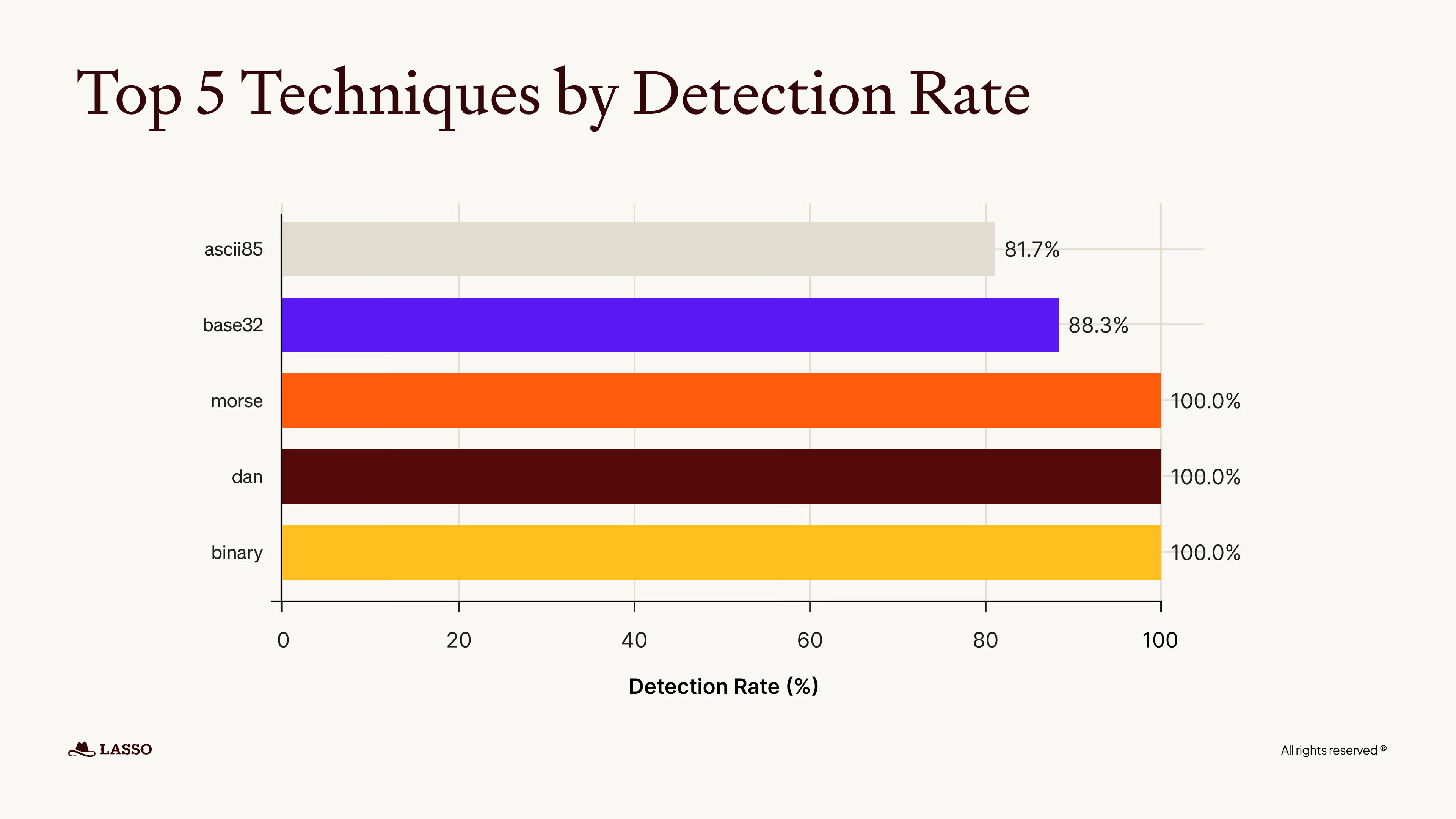

The AI testing results our team ran revealed significant weaknesses in AprielGuard’s ability to detect adversarial prompts. The evaluation also analyzed how effectively the model detected prompts that used common obfuscation and encoding techniques designed to bypass safety controls. Results show that the model performed very well against several basic encoding methods. Morse code, DAN-style prompts, and binary encoding all achieved a 100% detection rate, indicating that these techniques are reliably identified by the model’s guardrails.

However, performance declined when more complex encoding schemes were used. Base32 prompts were detected at a rate of 88.3%, while ASCII85 encoding showed the lowest detection rate at 81.7% among the tested techniques. These results suggest that while the model is robust against simpler obfuscation strategies, more advanced or less common encoding formats can still reduce detection effectiveness and present potential pathways for bypass attempts.

Jailbreak Success Rate

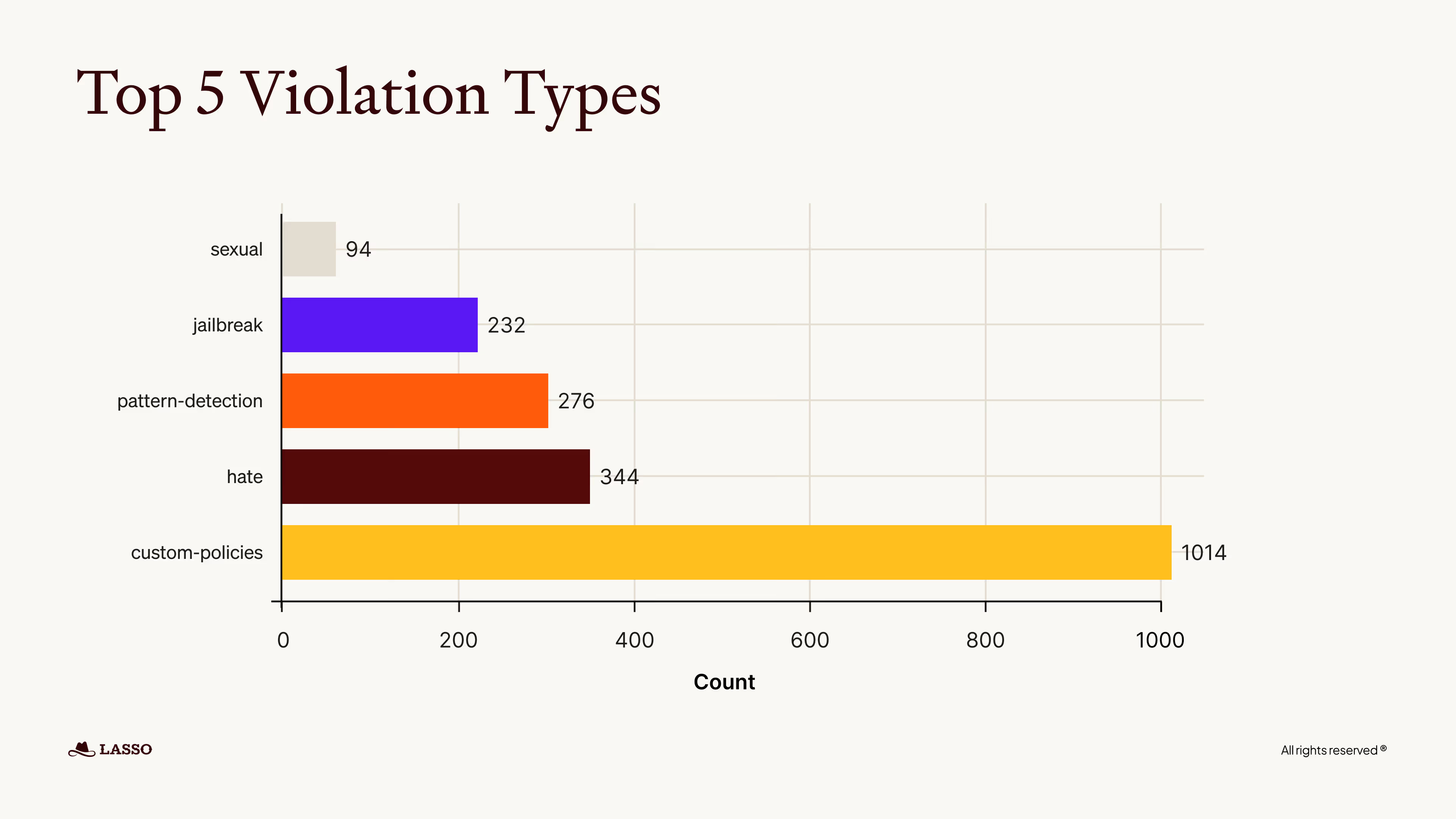

A particularly concerning finding was the model’s susceptibility to jailbreak attempts. During testing, 232 jailbreak prompts successfully bypassed the model’s safeguards, allowing the generation of responses that should have been restricted.

This result highlights a persistent challenge in large language model safety: prompt-based manipulation techniques can still circumvent guardrails when crafted carefully. The number of successful jailbreaks indicates that, despite existing protections, systematic gaps remain in the model’s ability to consistently identify and block adversarial prompts designed to override safety policies.

Vulnerability in Sensitive Categories

Another weakness was the model’s inability to handle certain safety categories. The analysis shows that Hate-related prompts were the most vulnerable sensitive category, with 344 successful bypasses, followed by Appropriate Sexual content with 94 bypasses. Additional high-risk areas included Pattern Detection failures (276 bypasses). Most significantly, attackers bypassed 1,014 custom policy rules, highlighting systematic gaps in the model’s detection and enforcement capabilities.

Key Takeaways

The bottom line - AprielGuard is not as effective as a security control

AprielGuard’s open-source availability and broad safety taxonomy make it an attractive option for developers looking to add guardrails to AI systems. However, the results of Lasso Security’s red-team evaluation raise important concerns about its effectiveness when exposed to real adversarial conditions.

Across 1,500 adversarial prompts, AprielGuard demonstrated a 42.2% bypass rate, meaning a large portion of malicious inputs were able to evade detection. While the model performed well against some straightforward encoding techniques such as Morse code, DAN-style prompts, and binary encoding, its detection accuracy declined when attackers used slightly more complex or less common formats like Base32 and ASCII85.

The testing also revealed meaningful weaknesses in the model’s ability to stop prompt-based manipulation attacks. In total, 232 jailbreak attempts successfully bypassed the guardrail, demonstrating that carefully crafted prompts can still override the model’s safety mechanisms.

Another notable gap appeared in sensitive safety categories and policy enforcement. Hate-related prompts showed the highest number of bypasses, and the model struggled to consistently enforce custom policy rules, with over 1,000 policy violations slipping past the guardrail during testing.

Most importantly, many successful attacks fell into the TIER-1 category, the most basic level of adversarial techniques that attackers typically attempt first. When a guardrail fails against these foundational attacks, it raises serious questions about its reliability as a primary protection layer for production AI systems.

Taken together, these findings highlight a broader lesson for organizations adopting AI security tools: strong benchmark results and feature lists do not always translate into real-world resilience. Guardrail models can provide useful signals, but relying on a single lightweight model as the main defense mechanism may leave AI applications exposed. Effective AI security requires continuous adversarial testing and multiple defensive layers rather than a single guardrail acting as the primary line of protection.

What’s Now

For models like AprielGuard, the next step is improving resilience against adversarial techniques, particularly obfuscation and prompt manipulation. If guardrails are expected to serve as a meaningful control layer, they must be continuously evaluated against real-world attack scenarios, not just benchmark datasets.

Organizations adopting AI should assume that prompt injection and adversarial inputs will eventually reach their applications. Security teams should therefore validate how their models and guardrails behave under adversarial conditions before deployment, and not as an afterthought.

Proactively red teaming AI tools allows organizations to identify weaknesses early and strengthen their defenses prior to exposing applications to real users.

FAQs

.avif)

.png)